How NLTK Discovers Third Party Software

NLTK finds third-party software through environment variables or through path arguments passed to its API calls. This page lists installation instructions for each tool and the environment variables associated with it.
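The lookup order can be sketched in a few lines of plain Python. This is a minimal illustration, not NLTK code: `resolve_tool_path` is a hypothetical helper. It assumes that a path passed explicitly through an API call takes precedence over environment variables, which matches how NLTK's internal helpers (such as `nltk.internals.find_binary`) appear to behave.

```python
import os
from typing import Iterable, Optional


def resolve_tool_path(explicit: Optional[str] = None,
                      env_vars: Iterable[str] = ()) -> Optional[str]:
    """Return the configured location of a third-party tool.

    Hypothetical helper: an explicit path (e.g. an API argument) wins;
    otherwise the first environment variable that is set and non-empty
    is used. Returns None when nothing is configured.
    """
    if explicit:
        return explicit
    for name in env_vars:
        value = os.environ.get(name)
        if value:
            return value
    return None


if __name__ == "__main__":
    os.environ["MALT_PARSER"] = "/home/user/maltparser-1.8/"
    # With no argument, the environment variable is used...
    print(resolve_tool_path(env_vars=["MALT_PARSER"]))
    # ...but an explicit argument takes precedence.
    print(resolve_tool_path("/opt/maltparser", env_vars=["MALT_PARSER"]))
```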

Java

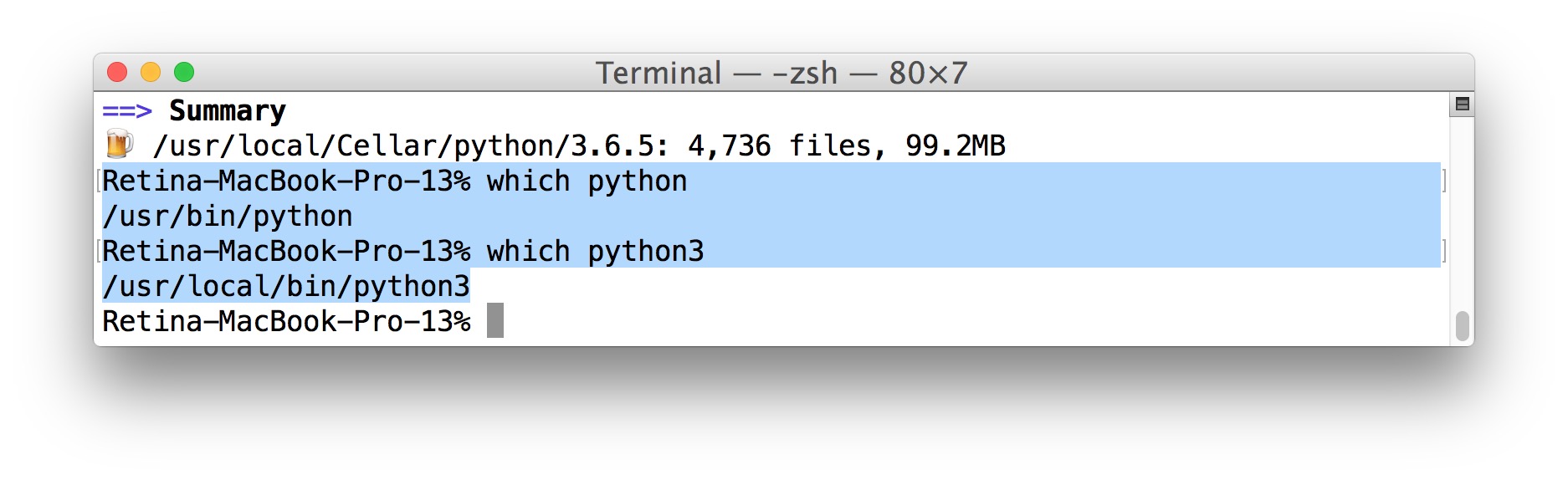

Serious n00b. NLTK in python 2.7 and 3.5. Pythlang Silly Frenchman. Posts: 30 Threads: 3. How do I use NLTK on Python 3.5? I've tried installing on 2.7 and I get permission errors. Did my ineptitude in creating a virtualenv somehow fail and did I screw up bad? I'm using a Mac with OS X Sierra and have successfully, as far as I know. Nov 19, 2015 - Some users may need to install pip (python package manager) before proceeding $ sudo easy_install pip Install nltk (natural language toolkit).

Java is not required by NLTK; however, some third-party software depends on it. NLTK finds the java binary via the system PATH environment variable, or through JAVAHOME or JAVA_HOME. To find Java archives (jar files), NLTK checks the CLASSPATH environment variable; in addition, most dependencies have their own environment variable, which is also searched individually.

Windows

- Download & install the JDK from Java's official website: http://www.oracle.com/technetwork/java/javase/downloads/index.html?ssSourceSiteId=otnjp

Linux

It is best to use your distribution's package manager to install Java.
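A rough Python sketch of the Java search order described above (JAVAHOME, then JAVA_HOME, then PATH). NLTK's own lookup lives in `nltk.internals.config_java`; the function `find_java` below is hypothetical, and the assumption that each variable may name the binary itself, its directory, or a JDK root with a `bin/` subdirectory is ours, not a documented NLTK rule.

```python
import os
import shutil
from typing import Optional


def find_java() -> Optional[str]:
    """Sketch of the Java lookup described above (not NLTK's code).

    JAVAHOME and JAVA_HOME are checked first; each may name the java
    binary itself or a directory containing it (directly or in bin/).
    Falls back to searching the system PATH.
    """
    exe = "java.exe" if os.name == "nt" else "java"
    for var in ("JAVAHOME", "JAVA_HOME"):
        value = os.environ.get(var)
        if not value:
            continue
        if os.path.isfile(value):
            return value
        for candidate in (os.path.join(value, exe),
                          os.path.join(value, "bin", exe)):
            if os.path.isfile(candidate):
                return candidate
    # shutil.which searches PATH (and honours PATHEXT on Windows).
    return shutil.which("java")
```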

Stanford Tagger, NER, Tokenizer and Parser

To install:

- Make sure Java is installed (version 1.8+)

- Download & extract the Stanford NER package: http://nlp.stanford.edu/software/CRF-NER.shtml

- Download & extract the Stanford POS tagger package: http://nlp.stanford.edu/software/tagger.shtml

- Download & extract the Stanford Parser package: http://nlp.stanford.edu/software/lex-parser.shtml

- Add the directories containing stanford-postagger.jar, stanford-ner.jar and stanford-parser.jar to the CLASSPATH environment variable

- Point the STANFORD_MODELS environment variable to the directories containing the Stanford tokenizer, POS, NER and parser models (e.g. arabic.tagger, arabic-train.tagger, chinese-distsim.tagger, stanford-parser-x.x.x-models.jar...), for example:

export STANFORD_MODELS=/usr/share/stanford-postagger-full-2015-01-30/models:/usr/share/stanford-ner-2015-04-20/classifier
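The same variables can also be set from Python, provided this happens before any NLTK Stanford wrapper is constructed (the wrappers read the environment when they look up their jars and models). A minimal sketch: `stanford_env` is a hypothetical helper, and the directory names are copied from the export example above, so adjust them to your installed versions.

```python
import os
from typing import Dict, Iterable


def stanford_env(jar_dirs: Iterable[str], model_dirs: Iterable[str]) -> Dict[str, str]:
    """Build CLASSPATH and STANFORD_MODELS values (hypothetical helper).

    Existing CLASSPATH entries are kept and directories not already
    present are appended. Entries are joined with os.pathsep
    (':' on Linux/Mac, ';' on Windows).
    """
    classpath = [p for p in os.environ.get("CLASSPATH", "").split(os.pathsep) if p]
    classpath += [d for d in jar_dirs if d not in classpath]
    return {
        "CLASSPATH": os.pathsep.join(classpath),
        "STANFORD_MODELS": os.pathsep.join(model_dirs),
    }


if __name__ == "__main__":
    env = stanford_env(
        jar_dirs=["/usr/share/stanford-postagger-full-2015-01-30",
                  "/usr/share/stanford-ner-2015-04-20"],
        model_dirs=["/usr/share/stanford-postagger-full-2015-01-30/models",
                    "/usr/share/stanford-ner-2015-04-20/classifier"],
    )
    # Apply before constructing any NLTK Stanford tagger or parser.
    os.environ.update(env)
    print(env["STANFORD_MODELS"])
```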

Tadm (Toolkit for Advanced Discriminative Modeling)

To install

- Download & compile TADM: http://tadm.sourceforge.net/

- Set the environment variable TADM to point to the tadm binaries directory.

Megam (MEGA Model Optimization Package)

To install

- Download & compile MEGAM's source: http://www.umiacs.umd.edu/~hal/megam/index.html

- Set the environment variable MEGAM to point to the MEGAM directory.

- If using the MacPorts version of OCaml, modify the MEGAM Makefile to specify the following:

WITHCLIBS =-I /opt/local/lib/ocaml/caml
WITHSTR =str.cma -cclib -lcamlstr
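If you prefer to script the MacPorts change, the two Makefile settings can be rewritten with a regular expression. This is a sketch under an assumption: that the MEGAM Makefile assigns WITHCLIBS and WITHSTR on single lines of the form `NAME =value`. Both `patch_makefile_text` and `patch_megam_makefile` are hypothetical helpers; keep a backup of the Makefile before running them.

```python
import re
from pathlib import Path

# Values from the MacPorts note above.
MACPORTS_SETTINGS = {
    "WITHCLIBS": "-I /opt/local/lib/ocaml/caml",
    "WITHSTR": "str.cma -cclib -lcamlstr",
}


def patch_makefile_text(text: str, settings: dict = MACPORTS_SETTINGS) -> str:
    """Replace each single-line `NAME = ...` assignment named in `settings`."""
    for name, value in settings.items():
        pattern = re.compile(rf"^{name}\s*=.*$", re.MULTILINE)
        # A function replacement avoids backslash escapes in `value`.
        text = pattern.sub(lambda _m: f"{name} ={value}", text)
    return text


def patch_megam_makefile(path: str) -> None:
    """Rewrite the Makefile at `path` in place."""
    makefile = Path(path)
    makefile.write_text(patch_makefile_text(makefile.read_text()))
```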

C&C Tools/Boxer

To install

- Check out & compile the latest SVN revision: http://svn.ask.it.usyd.edu.au/trac/candc/wiki/Subversion

- Set the environment variable CANDC to point to the C&C directory.

Prover9 & Mace4

To install

- Download & extract Prover9 & Mace4: http://www.cs.unm.edu/~mccune/mace4/

- Set the environment variable PROVER9 to point to the binaries directory.
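TADM, MEGAM, CANDC and PROVER9 are all configured by pointing an environment variable at a directory, so a quick check that the expected executables are really there can save a confusing error later. A minimal sketch: `missing_binaries` is hypothetical, and the binary names in the usage (tadm; prover9 and mace4) are assumptions based on those distributions. MEGAM and C&C may use other names or a `bin/` subdirectory.

```python
import os
import shutil
from typing import Iterable, List


def missing_binaries(env_var: str, names: Iterable[str]) -> List[str]:
    """Return the names that are not executable files inside the
    directory named by `env_var` (all of them if the variable is unset
    or not a directory). Hypothetical setup check, not NLTK code.
    """
    names = list(names)
    directory = os.environ.get(env_var, "")
    if not os.path.isdir(directory):
        return names
    # With path=..., shutil.which searches only that directory and
    # requires the file to be executable.
    return [n for n in names if shutil.which(n, path=directory) is None]


if __name__ == "__main__":
    for var, names in {"TADM": ["tadm"], "PROVER9": ["prover9", "mace4"]}.items():
        missing = missing_binaries(var, names)
        print(var, "OK" if not missing else "missing: " + ", ".join(missing))
```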

Malt Parser

To install

- Make sure Java is installed

- Download & extract the Malt Parser: http://www.maltparser.org/download.html

- Set the environment variable MALT_PARSER to point to the MaltParser directory, e.g. /home/user/maltparser-1.8/ in Linux.

- When using a pre-trained model, set the environment variable MALT_MODEL to point to the .mco file, e.g. engmalt.linear-1.7.mco from http://www.maltparser.org/mco/mco.html.
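Before constructing a parser, the two variables can be sanity-checked against the rules above: MALT_PARSER must be a directory, and MALT_MODEL, if set, must be an existing .mco file. A minimal sketch; `check_malt_env` is a hypothetical helper and its messages are illustrative.

```python
import os
from typing import List


def check_malt_env() -> List[str]:
    """Return a list of problems with MALT_PARSER / MALT_MODEL (empty if OK).

    Hypothetical sanity check: MALT_PARSER should be a directory, and
    MALT_MODEL (only needed for a pre-trained model) an existing .mco file.
    """
    problems = []
    parser_dir = os.environ.get("MALT_PARSER")
    if not parser_dir or not os.path.isdir(parser_dir):
        problems.append("MALT_PARSER is unset or not a directory")
    model = os.environ.get("MALT_MODEL")
    if model is not None:
        if not model.endswith(".mco"):
            problems.append("MALT_MODEL should point to a .mco file")
        elif not os.path.isfile(model):
            problems.append("MALT_MODEL file does not exist")
    return problems
```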

Hunpos Tagger

To install

- Download & extract the Hunpos tagger and a model file: https://code.google.com/p/hunpos/downloads/list

- Set the environment variable HUNPOS_TAGGER to point to the directory containing the hunpos-tag binary

- NLTK also searches for the model files using the same environment variable, so you can put the model file in the same location (NB: the model file path can also be passed to the nltk.tag.hunpos.HunposTagger class via the path_to_model argument)
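The model lookup can be pictured as follows: a path that already exists is used as given, and otherwise the bare file name is searched for in the HUNPOS_TAGGER directory. This is a sketch, not NLTK's implementation; `find_hunpos_model` is hypothetical, and treating the variable as an os.pathsep-separated list of directories is an assumption.

```python
import os
from typing import Optional


def find_hunpos_model(path_to_model: str) -> Optional[str]:
    """Locate a Hunpos model file (hypothetical sketch, not NLTK's code).

    An existing path is returned unchanged; otherwise the file name is
    looked up in each directory listed in HUNPOS_TAGGER.
    """
    if os.path.isfile(path_to_model):
        return path_to_model
    name = os.path.basename(path_to_model)
    for directory in os.environ.get("HUNPOS_TAGGER", "").split(os.pathsep):
        if directory:
            candidate = os.path.join(directory, name)
            if os.path.isfile(candidate):
                return candidate
    return None
```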

Senna for Various NLP Tasks

To install

- Download & extract the Senna files: http://ml.nec-labs.com/senna/

- Set the environment variable SENNA to point to the Senna directory. NLTK searches for the binary executable files via this environment variable, but the directory path can also be passed to the nltk.tag.senna.SennaTagger class via the senna_path argument.

CRFSuite for CRF Tagger

To install

- Download & compile: http://www.chokkan.org/software/crfsuite/

- Set the environment variable CRFSUITE to point to the directory containing crfsuite (for Linux) or crfsuite.exe (for Windows). NLTK searches for the binary executable files via this environment variable, but the executable file path can also be passed to the nltk.tag.crfsuite.CRFTagger class via the file_path argument.
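Because the executable name differs by platform, a small sketch of building the expected path from CRFSUITE may help when checking an installation. `crfsuite_executable` is a hypothetical helper; the `windows` flag defaults to the current platform and is a parameter only so the choice can be tested.

```python
import os
from typing import Optional


def crfsuite_executable(directory: Optional[str] = None,
                        windows: Optional[bool] = None) -> str:
    """Return the expected crfsuite path (hypothetical helper).

    Uses crfsuite.exe on Windows and crfsuite elsewhere. When no
    directory is given, CRFSUITE is read (KeyError if it is unset).
    """
    if directory is None:
        directory = os.environ["CRFSUITE"]
    if windows is None:
        windows = os.name == "nt"
    return os.path.join(directory, "crfsuite.exe" if windows else "crfsuite")
```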

REPP Tokenizer

To install

- The REPP installation has been tested on Linux and Mac OS. For more information, see http://moin.delph-in.net/ReppTop

- After installing, you can set the environment variable REPP_TOKENIZER to point to the directory containing the repp tokenizer, e.g. (/path/to/where/you/wanna/save/repp/). You can then instantiate the tokenizer object without specifying any parameter, e.g. (tokenizer = nltk.tokenize.ReppTokenizer())

- Alternatively, you can create the ReppTokenizer object directly by passing in the directory containing the repp tokenizer, without setting the environment variable, i.e. (tokenizer = nltk.tokenize.ReppTokenizer('/path/to/where/you/wanna/save/repp'))

If the ./configure CPPFLAGS=-P step shows an error on Mac, install and link the ICU library (brew install icu4c && brew link icu4c --force) and then retry from the ./configure CPPFLAGS=-P step. If for any reason you need to unlink icu4c, run: brew unlink icu4c.

First, follow the installation instructions for Chocolatey. It is a community system package manager for Windows 7+ (it is very much like Homebrew on OS X).

Once Chocolatey is set up, installing Python 3 is very simple, because Chocolatey installs Python 3 by default.

Once you have run the install command, you should be able to launch Python directly from the console. (Chocolatey is fantastic and automatically adds Python to your PATH.)

Setuptools + Pip

The two most crucial third-party Python packages are setuptools and pip, which let you download, install and uninstall any compliant Python software product with a single command. They also let you add this network installation capability to your own Python software with very little work.

All supported versions of Python 3 include pip, so just make sure it is up to date.

Pipenv & Virtual Environments

The next step is to install Pipenv, so you can install dependencies and manage virtual environments.

A virtual environment is a tool to keep the dependencies required by different projects in separate places, by creating a separate Python environment for each of them. It solves the "Project X depends on version 1.x but Project Y needs 4.x" dilemma, and keeps your global site-packages directory clean and manageable.

For example, you can work on a project which requires Django 2.0 while also maintaining a project which requires Django 1.8.
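Pipenv builds on Python's virtual environments. To show the underlying idea, here is a sketch that uses the standard-library venv module (swapped in here in place of Pipenv) to create one isolated environment per project; `make_env` and the directory name are illustrative.

```python
import os
import tempfile
import venv


def make_env(path: str) -> str:
    """Create a virtual environment at `path` and return its pyvenv.cfg path.

    with_pip=False keeps this fast and offline; pass with_pip=True to
    bootstrap pip into the new environment.
    """
    venv.create(path, with_pip=False)
    return os.path.join(path, "pyvenv.cfg")


if __name__ == "__main__":
    # One environment per project keeps their dependencies separate.
    with tempfile.TemporaryDirectory() as tmp:
        cfg = make_env(os.path.join(tmp, "project-x-env"))
        print(os.path.isfile(cfg))
```

Each environment gets its own site-packages directory, so Project X and Project Y can pin different Django versions without interfering.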

So, onward! To the Pipenv & Virtual Environments docs!

This page is a remixed version of another guide, which is available under the same license.